    // N.B. the arguments are _not_ axis and angle, but rather the
    // two vectors we are rotating between.
    Quaternion get_rotation_between(Vector3 u, Vector3 v)
    {
      // It is important that the inputs are of equal length when
      // calculating the half-way vector.
      u = normalized(u);
      v = normalized(v);

      // Unfortunately, we have to check for when u == -v, as u + v
      // in this case will be (0, 0, 0), which cannot be normalized.
      if (u == -v)
      {
        // 180 degree rotation around any orthogonal vector
        return Quaternion(0, normalized(orthogonal(u)));
      }

      Vector3 half = normalized(u + v);
      return Quaternion(dot(u, half), cross(u, half));
    }

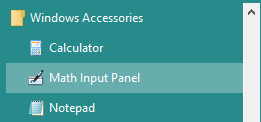


Given that we can construct a quaternion representing a rotation around an axis like so:

    q.w = cos(angle / 2)
    q.x = sin(angle / 2) * axis.x
    q.y = sin(angle / 2) * axis.y
    q.z = sin(angle / 2) * axis.z

And that the dot and cross product of two normalized vectors are:

    dot     = cos(theta)
    cross.x = sin(theta) * perpendicular.x
    cross.y = sin(theta) * perpendicular.y
    cross.z = sin(theta) * perpendicular.z

Seeing as a rotation from u to v can be achieved by rotating by theta (the angle between the vectors) around the perpendicular vector, it looks as though we can directly construct a quaternion representing such a rotation from the results of the dot and cross products. However, doing so puts theta where the quaternion expects angle / 2, so the result would be a rotation by 2 * theta: twice the desired rotation.

One solution is to compute a vector half-way between u and v, and use the dot and cross product of u and the half-way vector to construct a quaternion representing a rotation of twice the angle between u and the half-way vector, which takes us all the way to v!

There is a special case, where u = -v and a unique half-way vector becomes impossible to calculate. This is expected, given the infinitely many "shortest arc" rotations which can take us from u to v, and we must simply rotate by 180 degrees around any vector orthogonal to u (or v) as our special-case solution. This is done by taking the normalized cross product of u with any other vector not parallel to u.

The pseudocode above implements this (obviously, in reality the special case would have to account for floating point inaccuracies, probably by checking the dot product against some threshold rather than an absolute value).

Also note that there is no special case when u = v (the identity quaternion is produced; check and see for yourself).
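For anyone who wants to try the half-way vector approach directly, here is a minimal runnable sketch in plain Python. It is one possible reading of the method, not a reference implementation. The `(w, x, y, z)` tuple layout, the `eps` threshold of `1e-6`, the way `orthogonal` picks its helper axis, and the `rotate` function used for checking are all assumptions added for illustration.

```python
import math

def normalized(v):
    """Return v scaled to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    if n == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(c / n for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def orthogonal(v):
    """Return some vector orthogonal to v (hypothetical helper).

    Crosses v with the basis axis along which v has its smallest
    component, so the two are never parallel for non-zero v.
    """
    x, y, z = (abs(c) for c in v)
    if x <= y and x <= z:
        other = (1.0, 0.0, 0.0)
    elif y <= z:
        other = (0.0, 1.0, 0.0)
    else:
        other = (0.0, 0.0, 1.0)
    return cross(v, other)

def get_rotation_between(u, v, eps=1e-6):
    """Unit quaternion (w, x, y, z) rotating u onto v along the shortest arc."""
    u = normalized(u)
    v = normalized(v)
    # Threshold test instead of u == -v, to tolerate floating point error.
    # eps is an assumed value; tune it for your data.
    if dot(u, v) < -1.0 + eps:
        # 180 degree rotation around any vector orthogonal to u.
        return (0.0, *normalized(orthogonal(u)))
    half = normalized(tuple(a + b for a, b in zip(u, v)))
    return (dot(u, half), *cross(u, half))

def rotate(q, p):
    """Rotate point p by unit quaternion q (helper for checking results)."""
    w, qv = q[0], q[1:]
    # p' = p + w * t + qv x t, where t = 2 * (qv x p)
    t = tuple(2.0 * c for c in cross(qv, p))
    qt = cross(qv, t)
    return tuple(p[i] + w * t[i] + qt[i] for i in range(3))
```

Calling `rotate(get_rotation_between(u, v), normalized(u))` should give back `normalized(v)` up to rounding, which is a quick way to check the result for your own inputs, including the u = -v and u = v cases.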


I came up with the solution that I believe Imbrondir was trying to present (albeit with a minor mistake, which was probably why sinisterchipmunk had trouble verifying it).

With recent advancements in machine perception and scene understanding, Visual Question Answering (VQA) has garnered much attention from researchers, who train neural models to jointly analyze, ground and reason over the multi-modal space of image visual context and natural language in order to answer natural language questions about the image contents. However, though recent works have achieved significant improvement over state-of-the-art models for questions that can be answered solely from the visual context of the image, such models are often limited: they cannot tackle questions involving external world knowledge beyond the visible contents. Though research has recently been driven towards external knowledge based VQA as well, there is significant room for improvement, as limited studies exist in this area. Inspired by these challenges, this paper is aimed at answering free-form and open-ended natural language questions that are not limited to the visual context of an image, but draw on external world knowledge as well. With this motive, and inspired by the human cognitive ability to comprehend and reason out answers when given a set of facts, this paper proposes a novel model architecture that treats VQA as a factoid question answering problem, leveraging state-of-the-art deep learning techniques for reasoning and inferring answers to free-form questions, in an attempt to improve the state of the art in open-ended visual question answering.